Proposal

Raymarching and Fractal Microgeometry

In this project, we plan to implement the 3D raymarcher presented in lecture to render scenes described by distance fields. We also hope to use the raymarcher to render implicitly represented fractals on top of traditional explicit triangle meshes to add surface detail. The goal of this hybrid rendering technique is to use the traditional triangle rasterizer to give an object its overall shape, and then harness the raymarcher’s ability to render implicit, structured geometry (like fractals) to add highly detailed microgeometry on top of the basic mesh.

Team members: (SID)

- Peter Manohar (26773035)

- Brian Lei (26442442)

- James Fong (3032171897)

Problem Description

Triangle meshes are often favored in real-time 3D renderers because of the wide range of geometries they can easily represent and the speed at which they can be rendered by a modern GPU. However, triangle meshes are ill-suited for geometries with highly detailed surfaces, where surface features commonly take up less than one pixel of screen space. For example, using a single triangle mesh to represent a gravel path by modeling every individual rock is prohibitively memory intensive and typically introduces aliasing artifacts. Numerous techniques have been developed to handle surface microgeometry, such as normal mapping and displacement mapping with tessellation. In this project, we hope to develop a new approach to rendering surface microgeometry using distance fields.

Distance fields are implicit representations of geometry in which the field value at each point in 3D space is the distance from that point to the nearest point on the underlying surface. This representation can compactly store highly detailed implicit surfaces such as fractals. By using fractals to represent microgeometry, we expect to use far less memory than an equivalent triangle mesh would need, and we hope to decrease rendering times as well.
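To make the definition above concrete, here is a minimal sketch of a distance field in Python. It assumes a signed convention (negative values inside the surface), which the proposal does not specify, and the names `sphere_sdf` and `repeated_spheres_sdf` are hypothetical. The second function hints at why the representation is compact: folding space into one repeating cell describes an unbounded grid of spheres with no extra storage.

```python
import math

def sphere_sdf(p, center=(0.0, 0.0, 0.0), radius=1.0):
    """Signed distance from point p to a sphere's surface (negative inside).

    The signed convention is an assumption made for this sketch.
    """
    dx, dy, dz = p[0] - center[0], p[1] - center[1], p[2] - center[2]
    return math.sqrt(dx * dx + dy * dy + dz * dz) - radius

def repeated_spheres_sdf(p, period=4.0, radius=1.0):
    """Infinite grid of spheres obtained by folding each coordinate into one cell.

    Each coordinate is wrapped into [-period/2, period/2), so a single sphere
    definition stands in for every copy in the grid.
    """
    q = tuple(((c + 0.5 * period) % period) - 0.5 * period for c in p)
    return sphere_sdf(q, radius=radius)
```

For example, `sphere_sdf((3.0, 0.0, 0.0))` evaluates to `2.0`, the gap between the query point and the unit sphere's surface.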

Raymarching is a rendering technique designed specifically for rendering a distance field to the screen. Unlike raytracing, a raymarcher does not solve for ray-object intersections analytically. Instead, it advances the t-parameter of the ray in discrete steps until the ray transitions from outside an object to inside the object. Raymarchers have been shown to render complex scenes in real time.
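The marching loop described above can be sketched as follows. This sketch uses sphere tracing, a common variant in which each step advances by the distance field's value at the current point, so the ray can never overshoot the surface. The function names, step limit, and thresholds are illustrative assumptions and not our final implementation.

```python
import math

def unit_sphere_sdf(p):
    """Signed distance to a unit sphere at the origin (example scene only)."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - 1.0

def raymarch(origin, direction, sdf, max_steps=128, max_dist=100.0, eps=1e-4):
    """Advance t along the ray until the field value drops below eps.

    Returns the ray parameter t at the hit, or None if the ray escapes.
    `direction` is assumed to be unit length.
    """
    t = 0.0
    for _ in range(max_steps):
        p = tuple(o + t * d for o, d in zip(origin, direction))
        dist = sdf(p)
        if dist < eps:
            return t       # close enough to the surface to count as a hit
        t += dist          # safe step: no surface lies within `dist` of p
        if t > max_dist:
            return None    # ray left the scene bounds
    return None            # step budget exhausted without a hit

hit_t = raymarch((0.0, 0.0, -5.0), (0.0, 0.0, 1.0), unit_sphere_sdf)
```

A ray starting five units in front of the unit sphere and aimed at its center should report a hit near `t = 4`, while a ray offset far enough to the side returns `None`.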

Drawing inspiration from the microfacet model and bump/displacement mapping, we wish to efficiently render such geometry by separating the rendering pipeline into “high” and “low” frequency geometry. Our idea is to use triangle meshes to take care of “low frequency” geometry, and then use raymarched fractals to add further “high frequency” details (overhangs, high-frequency “spikes”, foliage, etc.) on top of that geometry. Through our hybrid technique, we hope to simplify the modeling of these complicated surfaces by requiring only a model of the low-frequency geometry (thus lowering the polygon count), and to reduce aliasing artifacts by using raymarching to render the high-detail geometry. Ideally, through these optimizations, we would like to render foliage and other highly detailed microgeometry in (near) real-time in a modern rendering pipeline.

Goals and Deliverables

Part 1: Raymarcher (what we plan to deliver)

The first part of our project involves implementing a raymarcher as a way of speeding up the standard raytracing algorithm for (near) real-time rendering. The implementation can be split into several steps: (1) constructing a ray passing through a given pixel, (2) marching the ray to find an intersection, (3) approximating the surface normal vector at the intersection point, (4) shading/surfacing, and (5) lighting and color correction. Raymarching naturally entails various adaptations to rendering techniques we discussed in class. We should be able to implement raymarching because it is similar to raytracing (which we have already implemented) and the algorithm is well-known and standard. Our primary goal in this part is to render scenes represented using distance fields and triangle meshes. We should be able to use our raymarcher to reproduce the raymarched images found on mandelbulber.com and http://www.iquilezles.org/www/index.htm, and maybe even model some of our own scenes. We will judge the quality of our raymarcher by comparing the images it renders against the sample images found online.
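Steps (1), (3), and (4) above can be sketched as follows. This is a rough illustration under stated assumptions: a pinhole camera at the origin looking down the negative z-axis, normals estimated by central differences of the distance field, and a simple Lambertian diffuse term. All function names and default parameters are hypothetical, and the real implementation would live in an OpenGL shader rather than Python.

```python
import math

def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def camera_ray(px, py, width, height, fov_deg=60.0):
    """Step 1: unit direction of the ray through the center of pixel (px, py).

    Assumes a pinhole camera at the origin looking down -z, with a
    vertical field of view of fov_deg degrees.
    """
    aspect = width / height
    half = math.tan(math.radians(fov_deg) / 2.0)
    x = (2.0 * (px + 0.5) / width - 1.0) * half * aspect
    y = (1.0 - 2.0 * (py + 0.5) / height) * half
    return normalize((x, y, -1.0))

def estimate_normal(sdf, p, h=1e-4):
    """Step 3: approximate the normal as the normalized gradient of the field."""
    def diff(axis):
        hi, lo = list(p), list(p)
        hi[axis] += h
        lo[axis] -= h
        return sdf(tuple(hi)) - sdf(tuple(lo))
    return normalize((diff(0), diff(1), diff(2)))

def lambert(normal, light_dir):
    """Step 4: diffuse intensity, the clamped cosine between normal and light."""
    l = normalize(light_dir)
    return max(0.0, sum(n * c for n, c in zip(normal, l)))

def unit_sphere_sdf(p):
    """Example scene: signed distance to a unit sphere at the origin."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - 1.0
```

Central differences need six extra field evaluations per shaded pixel, which is a reasonable trade for not having to derive an analytic normal for every distance field in the scene.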

Part 2: Fractal Microgeometry (what we hope to achieve)

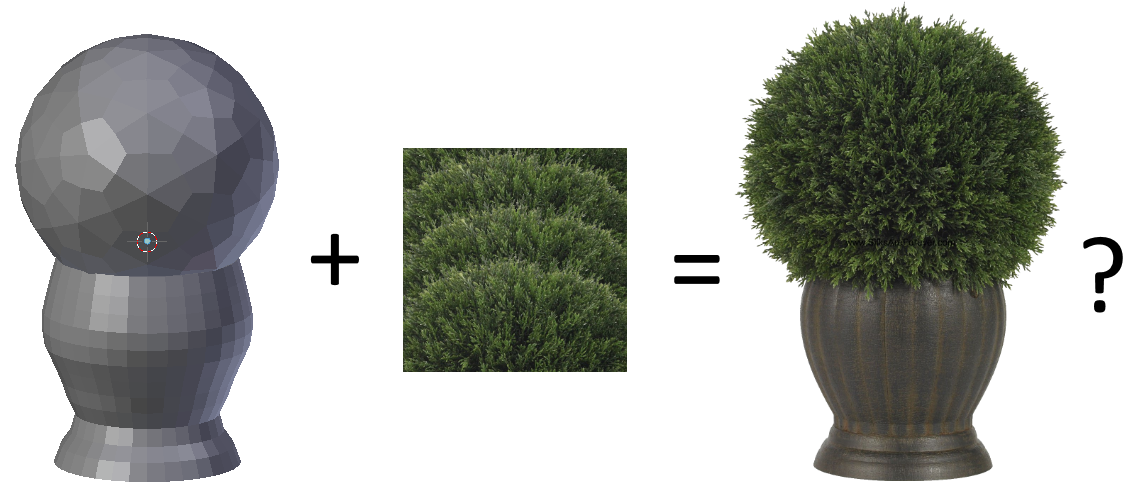

In addition to the basic raymarcher, in the second part of our project we hope to add highly detailed microgeometry to the surfaces of triangle meshes using fractals. Fractals are easy to render using a raymarcher because their (infinite) geometry can be efficiently represented using a distance field. Our idea is to use triangles to model the main geometry of the object (which is “low frequency”) and then use raymarching and fractals (which work well together) to render “high frequency” details on top of the main geometry. The image above gives an example of what we are hoping to achieve. The middle and right images are taken from websites and were not rendered by us.*
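As an illustration of how compactly a fractal can be expressed as a distance field, here is a sketch of an approximate distance function for a Menger sponge, using a folding construction that is well known in the raymarching community. The Menger sponge is only a stand-in chosen for this example; it is not necessarily the fractal we will use for foliage, and the names `box_sdf` and `menger_sdf` are hypothetical.

```python
import math

def box_sdf(p, half=1.0):
    """Signed distance to an axis-aligned cube with the given half-width."""
    q = [abs(c) - half for c in p]
    outside = math.sqrt(sum(max(c, 0.0) ** 2 for c in q))
    inside = min(max(q), 0.0)
    return outside + inside

def menger_sdf(p, iterations=4):
    """Approximate signed distance to a Menger sponge inside the cube [-1, 1]^3.

    Each iteration carves a repeating cross-shaped hole at one third of the
    previous scale, so detail grows with `iterations` at no memory cost.
    """
    d = box_sdf(p)
    scale = 1.0
    for _ in range(iterations):
        # Fold the point into a repeating cell of width 2 at the current scale.
        a = [((c * scale) % 2.0) - 1.0 for c in p]
        scale *= 3.0
        r = [abs(1.0 - 3.0 * abs(c)) for c in a]
        # Distance-like value for the infinite cross removed at this level.
        cross = min(max(r[0], r[1]), max(r[1], r[2]), max(r[2], r[0]))
        d = max(d, (cross - 1.0) / scale)  # intersect with the carved space
    return d
```

The whole shape is described by a few lines of arithmetic and an iteration count, whereas an explicit mesh of the same sponge would need roughly twenty times more geometry for every additional level of detail.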

Ideally, we would like to use our techniques to render an image like the one on the right above. If everything goes extremely well and we can accomplish the above in real-time, then we might try a small animation (for example, making the bush’s leaves move) by perturbing the fractal pattern over time. We will judge our output by the final quality of the rendered scene; for example, we would like the fractal-generated foliage to look somewhat realistic.

Schedule

Week of April 9: Get the raymarcher (part 1) working completely (i.e. complete the basic plan). We should be able to render images from http://www.iquilezles.org/www/index.htm#. Have a demo that uses the raymarcher to render scenes from distance fields.

Week of April 16: Do the Milestone deliverables. Begin implementing the fractal microgeometry (part 2, the aspirational plan). Ideally, we should have some preliminary images for part 2 by this point. Have a small demo with some fractal microgeometry.

Week of April 23: Finish the fractal microgeometry. Find interesting scenes to render. If time permits, animate the microgeometry by perturbing it over time, and make a “large” demo scene with many models.

Week of April 30: Put together a visually appealing demo. Put together project presentation materials. Finish final project deliverables.

Resources

References

- See https://mandelbulber.com and http://www.iquilezles.org/www/index.htm for examples of images rendered with raymarched distance fields.

- See http://www.iquilezles.org/ for many articles explaining various techniques for rendering with raymarching and distance fields.

- See https://www.microsoft.com/en-us/research/publication/generalized-displacement-maps-2/, which describes a technique for transforming eye rays to the space directly above a surface triangle.

- See https://www.researchgate.net/publication/234774961_Plants_fractals_and_formal_languages for a paper on how to procedurally generate plants from fractals.

Computing Platform

We plan to repurpose the project 2 (Mesh viewer) skeleton for windowing, input management, and OpenGL initialization.

We plan on using OpenGL for most of the implementation, but we will borrow elements from our previous class projects as needed. For hardware, we will use our own computers and the hive machines.

*Image of potted plant taken without permission from silksareforever.com